Fact: offering customers more choices kills conversion rates.

Fact: you can prime customers to behave in certain ways with certain phrases, images or ideas.

Researchers have shown it’s true.

Fact: if you give potential customers a small gift, you’ll create a debt that customers will eagerly repay.

Robert Cialdini wrote about that years ago in his popular book, Influence: The Psychology of Persuasion.

These ideas (and dozens of others) are so widely accepted that almost no one questions them.

But maybe we should.

That study about choices? Sometimes decreasing choices doesn’t increase response.

And that stuff about priming?

Well, scientists have struggled to reproduce the effects of several studies about priming, including one notorious study (mentioned by Malcolm Gladwell in his book Blink) in which participants were “primed” with words like “Florida” and “bingo,” then observed walking more slowly, like the elderly.

But we can still trust Cialdini, right?

Table of contents

- Why You Can’t Trust Everything Your Scientist Tells You.

- 1. Sometimes Researchers Make Honest Mistakes.

- 2. Sometimes Scientists Make Stuff Up.

- 3. Sometimes The Sample Size or Positive Effect Is Too Small.

- 4. Lots of Research Simply Can’t Be Replicated.

- 5. Negative Results Don’t Get Published.

- 6. Sometimes What’s Touted as “Science” Isn’t.

- 7. What Happens in a Lab or MRI Machine Isn’t The Same Thing that Happens on Your Site.

- So Does This Really Mean We Can’t Trust Scientific Research?

- Conclusion

Why You Can’t Trust Everything Your Scientist Tells You.

Over the past year or two, scientific research has come under increasing criticism for how it is conducted and promoted. Even scientists are starting to talk openly about the problems, from the way studies are designed to researchers’ dependence on grant money from government and private industry.

From an optimizer’s perspective, there are several reasons to be cautious when reading about the latest scientific discovery that identifies a new way to get customers to act. Things like…

1. Sometimes Researchers Make Honest Mistakes.

Nobody’s perfect.

And designing a study that controls for all the right inputs, includes enough participants to achieve statistical reliability, eliminates bias and false positives, and is replicable is exceptionally difficult.

In fact, it is so difficult that in 2005 the scientist John Ioannidis published a paper titled “Why Most Published Research Findings Are False.” In it he writes:

“There is increasing concern that in modern research, false findings may be the majority or even the vast majority of published research claims. However, this should not be surprising. It can be proven that most claimed research findings are false.”

Ioannidis outlines several areas where researchers commonly make mistakes. Often a study’s model doesn’t account for all the relevant inputs, or the study lacks adequate statistical power. Other times researchers can’t overcome their own bias, which leads them to miss, bury or ignore significant findings. And research teams almost always work alone, interpreting results in isolation, so there’s no immediate feedback on their work as it progresses.
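Part of Ioannidis’s argument is a simple calculation. In his paper, the probability that a statistically significant finding is actually true (the positive predictive value, or PPV) depends on the pre-study odds R that the tested relationship is real, the significance threshold alpha, and the study’s power (1 - beta): PPV = (1 - beta)R / (R - beta*R + alpha). The sketch below implements that formula but leaves out the extra bias term the paper also models. The example inputs are illustrative assumptions, not figures from the paper.

```python
def positive_predictive_value(prior_odds: float, power: float, alpha: float = 0.05) -> float:
    """Probability that a statistically significant finding reflects a true effect.

    Implements PPV = (1 - beta) * R / (R - beta * R + alpha) from Ioannidis (2005),
    without the paper's additional bias term.

    prior_odds: R, the ratio of true to false relationships among those tested.
    power: 1 - beta, the chance a study detects an effect that really exists.
    alpha: the false-positive rate (significance threshold).
    """
    beta = 1.0 - power
    # Both terms are proportional to counts of significant results.
    true_positives = (1.0 - beta) * prior_odds  # real effects that reach significance
    false_positives = alpha                     # null effects that reach significance anyway
    return true_positives / (true_positives + false_positives)


# Illustrative scenarios (assumed inputs, not data from any study).
well_powered = positive_predictive_value(prior_odds=1.0, power=0.8)
long_shot = positive_predictive_value(prior_odds=0.1, power=0.2)
print(f"Well-powered test of a plausible idea: {well_powered:.0%}")
print(f"Underpowered test of a long-shot idea: {long_shot:.0%}")
```

With these assumed inputs the first scenario comes out around 94% and the second around 29%. That is the heart of the argument: when power is low and the hypothesis is a long shot, most “significant” results can be false.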

A review published in the Proceedings of the National Academy of Sciences found that of 2,047 retracted biomedical and life-science papers, 21.3% were retracted because the researchers made an honest mistake in their work.

Mistakes happen. And sometimes they get published and promoted as “the latest scientific breakthrough”.

2. Sometimes Scientists Make Stuff Up.

That review I linked to above? It also reports that 43.4% of those retracted papers were pulled because of fraud or misconduct. Researchers fudging the numbers to deliver the results they need. Lab assistants adding false data to save time and work. Scientists compromising the peer review process. If they worked on Wall Street, some of these guys would be in jail.

And the amount of fraud is alarming: the percentage of papers retracted because of fraud has increased roughly tenfold since 1975.

The team at Retraction Watch has taken on the thankless task of tracking the hundreds of scientific papers retracted each year. These include dozens of retractions and corrections in the fields of psychology, sociology, neuroscience, consumer research and marketing—all fields that contribute to our knowledge of customer behavior.

Why do they cheat?

Money. Job opportunities. Publicity.

Academia places extraordinary pressure on scientists to do ground-breaking research in a “publish or perish” environment. Millions of dollars in research grants go to scientists with a track record of publishing positive results. Add to that the notoriety that comes when the media reports on an incredible result. It creates a powerful motivation to cook the data.

This might sound familiar to a lot of optimizers who experience similar pressure with their own research and tests.

3. Sometimes The Sample Size or Positive Effect Is Too Small.

Optimizers are familiar with the challenges of testing and statistical significance. Doing it well requires a large number of participants. That’s difficult for any study, and even more difficult for research involving fMRI machines, which many studies use to look for a neurological response to certain inputs (marketing ideas, storytelling, brand preference, etc.).

The cost of operating an fMRI machine is high, so studies that use one often draw their conclusions from a small number of participant brain scans. It’s not uncommon for a neuroscience study to include 20 to 30 participants, which is usually too few to support conclusions about the general population, though some researchers disagree.
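A rough power calculation shows why a few dozen participants is often not enough. This sketch assumes a simple two-group comparison (say, brain response to ad A versus ad B) analyzed with a two-sided test, and uses the normal approximation power ≈ Phi(d * sqrt(n/2) - z), where d is the standardized effect size (Cohen’s d), n is the number of participants per group, and z is the critical value for alpha. Real fMRI designs are often more complex, so treat this as an illustration, not a verdict on any particular study.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # mean 0, standard deviation 1


def approximate_power(effect_size: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample comparison (normal approximation).

    effect_size is Cohen's d. The negligible chance of rejecting in the
    wrong direction is ignored.
    """
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    noncentrality = effect_size * math.sqrt(n_per_group / 2)
    return _STD_NORMAL.cdf(noncentrality - z_crit)


def required_n_per_group(effect_size: float, power: float = 0.8, alpha: float = 0.05) -> int:
    """Participants needed per group to reach the target power (same approximation)."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    z_power = _STD_NORMAL.inv_cdf(power)
    return math.ceil(2 * ((z_crit + z_power) / effect_size) ** 2)


# Assumed scenario: a "medium" effect (d = 0.5) split across two groups.
for n in (10, 15, 30):
    print(f"{n} per group -> power {approximate_power(0.5, n):.0%}")
print("Needed for 80% power:", required_n_per_group(0.5), "per group")
```

Under these assumptions, 20 to 30 participants in total (10 to 15 per group) gives well under a 50% chance of detecting a medium-sized effect, while reaching the conventional 80% takes about 63 participants per group.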

But it’s not just neuroscience with this problem. That study about priming that I linked to above? It had just 61 participants.

And the jam study that showed fewer choices increase conversions? Of the 502 customers who walked by, only 249 stopped at the display, and just 35 made a purchase.

That doesn’t mean the results are wrong. But it does mean they may not apply universally to the 7 billion inhabitants of the planet. Or your customers.
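Small counts also mean wide uncertainty around any rate computed from them. As a minimal sketch, here is a Wilson score interval (one standard way to put a confidence interval on a proportion; the choice of method is ours, not the study’s) for the 35 purchases among the 249 shoppers who stopped. It covers the overall purchase rate only; comparing the two displays would require each condition’s counts, which aren’t given here.

```python
import math
from statistics import NormalDist


def wilson_interval(successes: int, trials: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score confidence interval for a binomial proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p_hat = successes / trials
    denom = 1 + z**2 / trials
    center = (p_hat + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width


# Figures from the jam study as reported above: 35 purchases among 249 shoppers who stopped.
low, high = wilson_interval(35, 249)
print(f"Observed purchase rate: {35 / 249:.1%}")
print(f"95% interval: {low:.1%} to {high:.1%}")
```

The observed rate is about 14%, but the interval runs from roughly 10% to 19%, a wide range to build a universal rule on.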

Similarly, just because a study reaches statistical significance doesn’t mean the effect it found is meaningful. A statistically significant but trivial effect will make almost no difference to real-world outcomes, and relying on that kind of research for your marketing would be a waste of time.
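To see how significance and meaningfulness come apart, consider a hypothetical A/B test with very heavy traffic. The sketch below runs a standard two-sided, two-proportion z-test with a pooled variance; the traffic and conversion numbers are invented for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates (pooled variance).

    Returns (z statistic, p-value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: 2,000,000 visitors per variant, 2.00% vs. 2.05% conversion.
n = 2_000_000
z, p = two_proportion_z_test(conv_a=40_000, n_a=n, conv_b=41_000, n_b=n)
print(f"z = {z:.2f}, p = {p:.4f}")
print(f"Absolute lift: {41_000 / n - 40_000 / n:.2%}")
```

The result clears any conventional significance bar (p is well below 0.001), yet the variant adds only a twentieth of a percentage point. Whether that is worth acting on is a business question the p-value can’t answer.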

4. Lots of Research Simply Can’t Be Replicated.

Every year tens of thousands of new studies are published in thousands of research journals, and almost all of them report original research. Scientists have very few incentives to conduct research whose sole purpose is to check that a previous study is correct.

But with the large number of retractions every year (roughly 500 and increasing), there’s a growing movement to try to replicate published findings.

Unfortunately, the results of this movement are alarming.

Last year a team of researchers reported on their efforts to reproduce the findings of 100 studies published in three high-ranking psychology journals. They were able to reproduce the results of only 39. What’s more, in a separate survey by Nature, two thirds of the responding researchers said that the lack of reproducibility is a major problem for science today.

5. Negative Results Don’t Get Published.

When was the last time you read a headline like this?

Researchers prove coffee has no effect on cancer rates.

Or, more appropriately for the optimizers who read CXL…

New study shows price rounding makes no difference in conversions.

It doesn’t happen.

Or rather, it doesn’t happen very often.

In 2012, Daniele Fanelli published a paper titled “Negative results are disappearing from most disciplines and countries,” in which he analyzed 4,600 scientific papers published between 1990 and 2007 to measure how often they reported positive results. He found that:

“The overall frequency of positive supports has grown by over 22%…”

Here’s the problem. Researchers often learn more from studies that fail than from those that succeed, but those studies are harder to publish. Predictably, that shapes how research is designed. Worse, scientists who pursue studies with negative findings receive less grant money and publish fewer papers.
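Filtering out negative results doesn’t just hide information; it also inflates the effects that do get published. The simulation below is a hypothetical illustration: the true effect of 0.2, the 20 participants per group, and the rule “publish only significant positive results” are all assumptions. It runs many small studies of a modest real effect and compares the average estimate across every study with the average across only the studies that would have been published. A fixed seed makes the run reproducible.

```python
import random
import statistics


def simulate_published_effects(true_effect: float, n_per_group: int, num_studies: int,
                               seed: int = 42) -> tuple[float, float, int]:
    """Simulate many small two-group studies and 'publish' only significant positive ones.

    Observations are drawn from normal distributions with standard deviation 1,
    so the z-test uses the known standard error sqrt(2 / n).
    Returns (mean estimate over all studies, mean estimate over published studies,
    number of published studies).
    """
    rng = random.Random(seed)
    se = (2 / n_per_group) ** 0.5
    all_estimates, published = [], []
    for _ in range(num_studies):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treatment = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
        estimate = statistics.fmean(treatment) - statistics.fmean(control)
        all_estimates.append(estimate)
        if estimate / se > 1.96:  # significant and positive at the 5% two-sided level
            published.append(estimate)
    published_mean = statistics.fmean(published) if published else float("nan")
    return statistics.fmean(all_estimates), published_mean, len(published)


overall, published_avg, count = simulate_published_effects(
    true_effect=0.2, n_per_group=20, num_studies=2000)
print(f"Average effect across all studies: {overall:.2f}")
print(f"Average effect across the {count} 'published' studies: {published_avg:.2f}")
```

In runs like this, the “published” average lands far above the true effect, because only the studies that happened to overestimate it cleared the significance bar.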

Optimizers face a similar problem. We focus on tactics and ideas that increase conversions. As Dr. Brian Cugelman has noted, optimizers “are completely incapable of studying what factors are correlated with negative behavioral outcomes.”

That’s not necessarily bad, but it does mean we often don’t understand the factors that might depress response, and we don’t get the full picture as we conduct our tests.

6. Sometimes What’s Touted as “Science” Isn’t.

It’s pretty easy to write the words, “studies show” and plenty of people do.

As in…

“Studies show that green buttons are clicked twice as often as red.”

Doesn’t make it true.

Another example…

Have you seen one of the many articles or infographics about color psychology that say the color red will make you hungry?

Page after page repeats that claim “according to research results.”

But good luck finding the research.

I spent two days looking. Nada.

There’s a study that says Nile tilapia fish eat more in red light. But it’s quite a stretch to apply that to restaurant customers.

And contrary to the claim, there’s at least one study that suggests people eat less off red plates and drink less out of red cups.

If red can make you hungry, science doesn’t seem to be in any hurry to show it.

But that doesn’t stop people from repeating this claim as science.

Or food-industry designers from using red on the mistaken assumption that it drives customer behavior.

Just because someone writes “according to the research” doesn’t mean there’s any actual research to show for it.

7. What Happens in a Lab or MRI Machine Isn’t The Same Thing that Happens on Your Site.

Well-designed studies usually take place under controlled conditions that are radically different from your customers’ situation. Let’s go back to the jam study. Shoppers were exposed to a table with a selection of jams, then encouraged by an assistant to find the jams on a shelf elsewhere in the store.

Is that how your customers add products to the shopping cart on your site?

It’s doubtful.

The online experience is completely different, which means the results from this study may not apply to your business. Your customers will act differently.

Most priming research involves exposing participants to a “prime,” such as particular words or a subliminal prompt, then observing their behavior afterward. Does this sound like your marketing efforts?

Probably not.

And remember how Cialdini tells the story of Hare Krishnas giving a small flower to people at the airport, then asking for a donation? People give in part because the gift creates a social obligation. But is that how you reach out to your customers, with a free flower in an airport and a request for a small donation?

Definitely not.

Which means that what researchers find in the lab (and in their field studies) may not be directly applicable to your email campaign, sales funnel or landing page.

So Does This Really Mean We Can’t Trust Scientific Research?

Not at all.

It doesn’t mean that all scientists fudge the numbers. In fact, very few do.

It doesn’t mean that all research is bad.

And it doesn’t mean that we can’t learn from it (even the bad studies).

The vast majority of researchers are good people trying their best to understand very complex subjects.

But it does suggest that we need to understand what the science actually says before we treat it as the solution to our marketing or optimization problems. No one should assume that what works in the lab will work on a website. Nor should they assume that because something works for another site or in a UX lab, it will work on your site too.

So what to do?

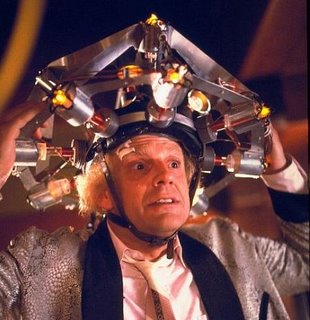

Imagine this scenario: your CEO or client sees a study reporting that pictures of smiling faces create positive feelings in customers. He immediately sends an email asking the marketing team to replace all the photos on the website with smiling people.

Because science.

Stop.

Instead of reacting to every new (and possibly dubious) research finding, follow a process that ensures customer needs drive what you do. Your process should look something like this…

1. Start With a Problem.

What are you trying to accomplish? If you just want to make your customers feel happier, changing out the photos on your website may be enough. But happier customers don’t necessarily buy more.

Don’t start with the science. Start with your marketing goals.

Ask: what do we need to do? Increase customer responses to a drip campaign? Improve customer click-throughs on your squeeze page? Pull customers from your Facebook page back to your blog? Drive conversions on your landing page?

2. Understand What’s Keeping You From Reaching that Goal Now.

Why aren’t your potential customers doing that now? Identify the elements in your marketing that cause friction.

You need to understand your customer and why they aren’t doing what you want them to do. Are you using the right words so they feel understood? Are there other elements on the page that distract them from the goal?

Are they confused by too many options or calls-to-action? Do they need social proof or a guarantee to help eliminate risk? Are you offering too many choices? Too few? Or perhaps they don’t feel the pain your product solves.

A great way to find this out is qualitative research. Whether you use on-page surveys, phone interviews, user tests or some other approach, don’t guess what your customers are thinking. Do the research.

3. Use Science to Inform Your Strategy and Tactics.

Once you better understand your customer needs and why they behave the way they do, it’s time to let science inform your thinking.

Maybe you need to drive conversions on your landing page and think that what Cialdini wrote about scarcity in Influence will do the trick.

Or, you need to increase engagement with your email campaign and believe that the ideas about enhancing personalization in Roger Dooley’s book, Brainfluence, will help solve the problem.

Maybe you need to move customers from your social media page onto your website and the ideas you got from the CXL blog about cognitive bias could provide the solution.

Whatever it is, this is where science can make a huge difference, by providing new ideas to test with your customers. But the marketing needs should drive the science you use, not the other way around.

4. Test. Learn. Then Test Again.

No matter how well you understand what your customer is thinking… no matter how good your strategy is… no matter what the science says about changing human behavior… you must test your tactics and evaluate what happens. Then make changes and test again.
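Before testing an idea borrowed from research, it helps to know how much traffic you need to detect the effect you’re hoping for. This is a minimal sketch using the standard normal-approximation sample-size formula for comparing two proportions, assuming a two-sided test at 5% significance and 80% power (common defaults, not rules). The baseline rate and lift in the example are hypothetical.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect a relative lift in conversion rate.

    Normal-approximation formula for a two-sided, two-proportion test.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical scenario: 3% baseline conversion, hoping to detect a 10% relative lift.
print(visitors_per_variant(0.03, 0.10))
```

With these assumed inputs it comes out to roughly 53,000 visitors per variant. Smaller sites may need to test bolder changes, or run tests longer, to get a trustworthy answer.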

Conclusion

Actually, marketers need more science, not less.

I started out with some of the reasons we need to be wary of scientific research. All true. But that doesn’t mean we should ignore it.

In fact, most optimizers should spend more time reviewing the research in a variety of fields like psychology, human behavior, sociology and neuroscience. That’s where you’ll find dozens of new ideas for tests that can help you better connect with your customers and meet their needs.

If you’re wondering how to tell good science from bad, start with research that’s peer-reviewed. Look for studies that demonstrate a meaningful effect (not just a statistically significant result). When possible, look for research with large sample sizes. Try to learn who sponsored the research. And, most importantly, test the findings before you assume you will see a similar effect.

Done right, it’s a bit like hiring several thousand researchers to send you a steady stream of ideas to consider. Can you afford to ignore them?

Note: don’t have time to follow all the research out there? CXL Institute does it for you, then presents you with the best ideas so you can test and put them to work. Check it out here.

I guess this is the most important article on this blog. Thank you so much, as sometimes it seems that critical thinking is totally missing (and I have a lot of respect for Peep for his conscientious approach).

It drives me mad to see all the “variant B got 286% lift in conversions” claims without at least the sample size. Or conversion experts trying to persuade ppl of their “scientific” approach by wearing a laboratory coat.

Thanks for the kind words about how we’re approaching content at CXL. Really appreciated.

Who knew that offering customers more (choices) would kill conversion rates? I didn’t, 3 years ago, and I tried just that and failed miserably. So I tend to believe this is true but, and this is a big BUT, it depends on how many actual choices you are giving them. Too many and they get frustrated and this will kill conversion rates. But I think anything under 5 choices should be fine, with the best number being 3 followed by 4. I tested this and it is true for me most of the time.

Hey Jonathan,

Thanks for your comment. I’m glad you learned the right number of options for your customers. I wish it were as easy as saying “more choices kills conversions”. The truth is sometimes it does and sometimes it doesn’t. Every situation is different so you need to test the options to see what your customers will respond to.

As Peep has said before, nothing is ever “finally” proven. Things change and evolve. What works in one situation doesn’t work in others. So we need to apply new ideas and adjust tactics to evolve with the changes. As we do that, science can provide a bunch of ideas for testing and improving.

-rm