Conversion optimization is hard, but the heaviest lifting is often organizational – changing an organization from a gut-based approach to one driven by data and experimentation.

Picture, perhaps, a Director of Marketing at a large, stable company with a solid brand. She’s on the cutting edge of digital marketing, reads articles like this and wants to implement a testing culture within her team and organization. Problem is, HiPPOs at her company are staunchly opposed due to a variety of entrenched ideas and political woes.

Or perhaps you’re heading growth at a fast-growing startup and want to build a culture of testing and continuous data-driven growth. How can you build a culture of experimentation when some executives’ opinions are so strong and their beliefs so entrenched?

How Small Tweaks Can Bring Big Change

Richard Thaler and Cass Sunstein wrote in Nudge about a concept called “choice architecture” – swaying decisions towards the best option through a variety of small tweaks called “nudges.” Things as seemingly insignificant as making organ donation the default (as opposed to requiring people to opt in) create huge changes in results.

Similarly, in Switch, Chip and Dan Heath wrote that, “Big-picture, hands-off leadership isn’t likely to work in a change situation, because the hardest part of change – the paralyzing part – is precisely in the details.” They detail many such examples, like Microsoft requiring developers to program on the same machines customers use to facilitate better usability for the end user, where small changes create big results.

There are many tactics you can use to gently sway your organization to become more experimentation-driven. Here are 6 ideas…

Note: It’s really difficult to describe in precise terms what a ‘culture of experimentation’ is, but I’ll try. For this article, it will mean a culture that is predicated on a belief that there aren’t any sacred cows in terms of the way things are done or designed. An opinion, no matter how highly paid, is still an opinion – instead, an organization makes data-informed decisions. In a culture of experimentation, failure isn’t punished, insights and learning are rewarded, and team members are encouraged to innovate and discover new ways of growth.

1. Script the Critical Moves

To begin, it’s important to clearly define what a ‘culture of experimentation’ looks like. Change can only occur with the participation of individuals and their behavior, but that’s a hard place to start because that’s where friction occurs. In Switch, Chip and Dan Heath wrote:

“To spark movement in a new direction, you need to provide crystal-clear guidance. That’s why scripting is important – you’ve got to think about the specific behavior that you’d want to see in a tough moment, whether the tough moment takes place in a Brazilian railroad system or late at night in your own snack-packed pantry.”

An idea for optimization: make a list of 4-8 rules to guide your optimization process. For example, Andrew Anderson, Head of Optimization at Malwarebytes, has the following guidelines for a discipline-based testing program:

- All Test Ideas are Fungible

- More Tests Does Not Equal More Money

- It Is Always About Efficiency

- Discovery is Part of Efficiency

- Type 1 Errors are the Worst Possible Outcome

- Don’t Target just for the Sake of It

- The Least Efficient Part of Optimization is the People (Yourself Included)

He also mentions that you should proactively generate roadmaps and focus areas. While this isn’t a small amount of work, investing in a winning process ensures a successful testing program (and there’s no better way to get buy-in than to produce ROI).

Specific, transparent, and publicly displayed choice architecture ensures clarity, and as Chip and Dan Heath wrote, “Clarity dissolves resistance.”

Clarity can be achieved in many different ways. Wherever you can script the critical moves and design the path with clarity, you should. One example that comes to mind is metrics. What’s the one metric of success that will define your tests? Defining it ahead of time will help eliminate political upheaval in the case of an ambiguous test.

Twitter does this to prevent HARKing (hypothesizing after the results are known). As they wrote:

“One way we guide experimenters away from cherry-picking is by requiring them to explicitly specify the metrics they expect to move during the set-up phase. Experimenters can track as many metrics as they like, but only a few can be explicitly marked in this way. The tool then displays those metrics prominently in the result page. An experimenter is free to explore all the other collected data and make new hypotheses, but the initial claim is set and can be easily examined.”
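To make the idea concrete, here is a minimal sketch of what “declare your metrics at set-up” could look like in code. This is not Twitter’s tool; the `ExperimentPlan` class, the `summarize` function, and the cap of three primary metrics are all assumptions I made up for illustration (the quote only says “a few”).

```python
from dataclasses import dataclass

# Assumed cap for illustration; the quote above only says "a few".
MAX_PRIMARY_METRICS = 3


@dataclass(frozen=True)
class ExperimentPlan:
    """A hypothetical test plan whose primary metrics are fixed before launch."""
    name: str
    primary_metrics: tuple[str, ...]
    tracked_metrics: tuple[str, ...] = ()

    def __post_init__(self):
        # Refuse to create a plan without an explicit, short list of claims.
        if not self.primary_metrics:
            raise ValueError("declare at least one primary metric before launch")
        if len(self.primary_metrics) > MAX_PRIMARY_METRICS:
            raise ValueError(f"at most {MAX_PRIMARY_METRICS} primary metrics allowed")


def summarize(plan, results):
    """Split observed lifts into pre-declared claims and exploratory findings."""
    primary = {m: results[m] for m in plan.primary_metrics if m in results}
    exploratory = {m: v for m, v in results.items() if m not in plan.primary_metrics}
    return {"primary": primary, "exploratory": exploratory}


plan = ExperimentPlan(
    name="checkout_copy",
    primary_metrics=("checkout_conversion",),
    tracked_metrics=("bounce_rate",),
)
report = summarize(plan, {"checkout_conversion": 0.04, "bounce_rate": -0.01})
```

Because the plan is frozen, nobody can quietly swap the primary metric after the results come in; anything else they find lands in the exploratory bucket as a new hypothesis.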

Matt Gershoff, CEO of Conductrics, also advises clarity upfront when it comes to expectations.

Matt Gershoff:

“Before doing anything, the team should first articulate what the expected gain in terms of value (revenue, gross profit) might be in the best case, and what the loss might be in the worse. This way you can filter out ideas that even in the best case really won’t have much of an impact to the business as well as ideas that have a good chance of failing badly, which could demoralize the organization.”
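A rough sketch of that filter, assuming you can put even a ballpark number on each idea’s best-case gain and worst-case loss. The `Idea` class, the `shortlist` function, and the thresholds are hypothetical names and values for illustration, not something Matt prescribed.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    best_case_gain: float   # estimated upside, e.g. monthly revenue
    worst_case_loss: float  # estimated downside, as a positive number


def shortlist(ideas, min_gain, max_loss):
    """Keep ideas whose upside is worth the effort and whose downside is tolerable."""
    kept = [
        idea for idea in ideas
        if idea.best_case_gain >= min_gain and idea.worst_case_loss <= max_loss
    ]
    # Biggest potential upside first.
    return sorted(kept, key=lambda idea: idea.best_case_gain, reverse=True)


ideas = [
    Idea("button color", best_case_gain=500, worst_case_loss=100),
    Idea("pricing page redesign", best_case_gain=40_000, worst_case_loss=5_000),
    Idea("remove guest checkout", best_case_gain=30_000, worst_case_loss=60_000),
]
worth_testing = shortlist(ideas, min_gain=5_000, max_loss=10_000)
```

With these made-up numbers, only the pricing page redesign survives: the button color test can’t matter even if it wins, and removing guest checkout could fail badly enough to hurt morale.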

2. Commit To a Testing Cadence

When beginning a testing program, a lack of results can be frustrating, but Sean Ellis, founder of GrowthHackers, has found success in planning and maintaining a specific test cadence. Here’s how he explained it:

Sean Ellis:

“For me the main thing that creates a culture of experimentation is committing to a testing cadence and sharing results. In the beginning you may need to be patient to generate results. But I’ve never seen a company run 10+ highly considered tests and not achieve a meaningful improvement. Wins drive buy in and buy in accelerates testing momentum.

So for me, the most important first step is committing to a weekly experiment release schedule. Stick with it for at least a month. Sharing result will drive more company wide participation. Over time you’ll find that the whole process is pretty addictive. But it requires a commitment and perseverance in the beginning.”

This is important in the beginning, but also for maintaining momentum as your program matures. As Peep wrote, your impact is determined by three factors:

- The number of tests you run

- The number of winning tests

- And by how much each test wins

While tempo alone doesn’t affect the latter two (this article tells you how to have more winning tests, and larger wins), it ensures you’ll run enough tests to make a good impact. And it ensures some wins – important for buy-in, especially early on.
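To see how the three factors interact, here is a back-of-the-envelope model. It rests on assumptions of mine, not Peep’s: every win delivers the same average lift, wins compound multiplicatively, and tests don’t interact with each other. The function name and parameters are hypothetical.

```python
def expected_program_impact(tests_run, win_rate, avg_lift_per_win):
    """Estimate cumulative relative lift from a testing program.

    Simplifying assumptions: each winning test delivers the same average
    lift, wins compound multiplicatively, and tests are independent.
    """
    expected_wins = tests_run * win_rate
    return (1 + avg_lift_per_win) ** expected_wins - 1


# Same win rate and lift per win, different tempo.
monthly_cadence = expected_program_impact(tests_run=12, win_rate=0.2, avg_lift_per_win=0.05)
weekly_cadence = expected_program_impact(tests_run=52, win_rate=0.2, avg_lift_per_win=0.05)
```

Under this toy model, moving from a monthly to a weekly cadence raises the estimated impact purely through volume, which is the point about tempo above. Real programs are messier, so treat the output as a conversation starter rather than a forecast.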

3. Make it a Game; Trigger Healthy Competition

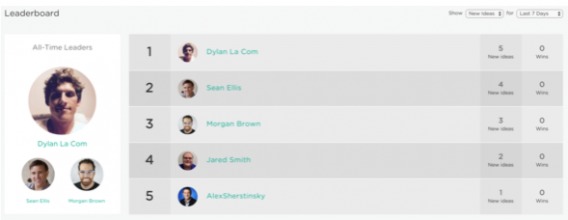

Using Projects by Growth Hackers, I noticed they had a feature called the “leaderboard.” This lets you track which members of your team are submitting the most test ideas, as well as which team members have the most wins.

While not everyone agrees that putting a focus on test ideas is efficient, competition has been known to increase productivity in certain settings. In Growth Hackers’ own experience, they found that it increased the volume of test ideas submitted per week:

“After the ideation started to slow down, we decided to add a leaderboard to celebrate the people who were generating the most ideas. The leaderboard re-energized the ideation process, so that we are now adding about 2 – 3X more ideas per week than we can test. Therefore, we are continuing to grow our backlog of hundreds of ideas.”
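If your team tracks ideas in a spreadsheet rather than a dedicated tool, a leaderboard like this takes only a few lines to build. The sketch below is not how Projects implements it; the `leaderboard` function and the `(author, won)` log format are assumptions for illustration.

```python
from collections import Counter


def leaderboard(idea_log, top_n=5):
    """Rank team members by ideas submitted, breaking ties by wins, then name.

    idea_log is a list of (author, won) pairs, one per submitted idea,
    where won is True if the idea became a winning test.
    """
    submitted = Counter(author for author, _ in idea_log)
    wins = Counter(author for author, won in idea_log if won)
    ranked = sorted(submitted, key=lambda a: (-submitted[a], -wins[a], a))
    return [(author, submitted[author], wins[author]) for author in ranked[:top_n]]


idea_log = [
    ("ana", True), ("ben", False), ("ana", False),
    ("cy", True), ("ben", True), ("ana", False),
]
for author, ideas, won in leaderboard(idea_log):
    print(f"{author}: {ideas} ideas, {won} wins")
```

Ranking by ideas first and wins second mirrors the two things the leaderboard described above tracks; swap the sort key if your team cares more about wins than volume.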

It’s not just about competition, either. Social influence is a trigger here as well. As Thaler and Sunstein wrote in Nudge, “The general lesson is clear. If choice architects want to shift behavior and to do so with a nudge, they might simply inform people about what other people are doing.”

Note that volume of ideas isn’t a metric that will be important to everyone. Volume of growth ideas for a fast growing startup? Totally awesome. For our internal growth at CXL, the more ideas the better.

But for landing page optimization on a high-traffic website? Sure, the more tests the better, but only if they are efficient and don’t incur opportunity costs (i.e., you’re testing dumb things when you could be testing things of higher impact). Projects by Growth Hackers also includes prioritization of ideas, so don’t take this trigger as a green light to try all 100 ideas from your “100 A/B test ideas to try right now” article.

4. Celebrate Failure Publicly and Often

In the early stages of developing a testing culture, your program is much more susceptible to disintegration with the arrival of any mishaps. That could be any number of things…failure to move the needle, failure to bring key stakeholders on board, failure to generate excitement, failure to renew your testing tool, and failure in its broadest sense.

To combat that, failure, or at least the insight gained from it, needs to be something that is celebrated.

Joanna Lord, Marketing Executive and Tech Advisor, talked at CTA Conference 2015 about a variety of ways she implemented this in her previous role at Porch. One way of celebrating failure was by drawing attention to it…

Joanna Lord:

“At Porch, we do a lot of really bizarre things. One thing is we have this pink, pony-dog-creature-fuzzy-animal thing – not alive, that would be cool – named Mr. Sparkles.

And every time you break the site, whoever breaks the site the worst gets Mr Sparkles for a week. You put him on your desk and it’s like this badge of honor that you like did something so bold that you literally messed up the site badly.

And you know what I love? You see my CEO walk around the room and he’s high-fiving the Mr. Sparkles owner. And people are like, “What did you do? What did you do to get Mr. Sparkles?”

But the reality is we’ve made it a positive thing. We’ve made it a badge of honor. You are living out the Porch-y way in being bold. What can you do in your culture to make it fun and acceptable? And almost, you know, become famous for it.”

While constant test failure isn’t ideal (it’s probably a sign you’re not doing adequate conversion research), new members shouldn’t feel fear at a fast growing company. Innovation can be vanquished pretty quickly in a state of underlying fear.

5. Embed Triggers Into Your Current Internal Communications

In her CTA Conference talk, Joanna mentioned another tactic they used at Porch to trigger a testing culture. Two tactics, actually, both related, and both embedded into their current internal communications.

The first tactic is that they sent out weekly test roundups. These included, first and foremost, the insights gained from tests, both from winners and losers. All of the other information fell below that, including whether the test won or lost. Not only does this keep communication transparent, but it spurs social influence by allowing the whole organization to see the scope of testing and the insights gained.

Leading with insight also changes the conversation, leading to more action instead of conceptual discussion.

The other tactic Joanna mentioned was a weekly meeting where they discuss tests:

Joanna Lord:

“We have a meeting every Friday – we call it Around-The-Porch – where we call out the tests, both winners and losers, and there’s clapping and there’s celebration.

So-and-so ran a test, that’s why the site was down X number of hours. It was really bad – good try on the test though! Here’s what we learned..and the site’s back up now…And you know, it can be uncomfortable and awkward but it’s so good.

And when you’re bringing in new people as fast as Porch is or some of your companies, their first week in, they see people failing, and getting clapped at…? Like, this is gonna be great! I think you have to change the shift and really just shake things up.”

This is a great way to celebrate testing, winners and losers, and it’s a great way to trigger organizational support. It makes experimentation salient because it’s embedded into existing meeting structures.

Even better, if you use a workflow management tool like Projects by Growth Hackers, you can integrate that with Slack and see all of the updates in a channel. You’re on Slack all day anyway, and seeing people add ideas to the backlog, or seeing test results ready to be analyzed, triggers other team members to think about experimentation.

Sean Ellis, who spoke above about implementing the leaderboard within Projects, says that was just one of many triggers he found to be effective:

Sean Ellis:

“As for the leaderboard, it’s just one of many triggers that keep people accountable to always be on the lookout for more ways to improve results. For example I started my day yesterday entering three ideas for tests. I got a thrill entering these ideas and it seems to have triggered a handful of additional ideas from other team members (the whole team was notified of my ideas over Slack).”

6. Prime Your Team Each Week With a 5 Minute Plan

Joanna mentioned both weekly digests and weekly meetings used to call attention to tests. Here’s another idea for a weekly ritual, one that will both analyze the progress of your testing program and will encourage its prosperity…

Usually, when researchers send out surveys, they’re simply looking for insights. But in certain cases, social scientists have discovered an odd phenomenon: when they measure people’s intentions, it affects how people subsequently act. This is known as the “mere-measurement effect.”

Thaler and Sunstein consider this a nudge in the most accurate sense. For example, they noted that “if people are asked whether they intend to eat certain foods, to diet, or to exercise, their answers to the questions will affect their behavior.” They also offered fascinating examples of this around voting, flossing, and fatty foods:

“It turns out that if you ask people, the day before the election, whether they intend to vote, you can increase the probability of their voting by as much as 25%…If people are asked how often they expect to floss their teeth in the next week, they floss more. If people are asked whether they intend to consume fatty foods in the next week, they consume less in the way of fatty foods.”

So why not implement a 15five style survey into your weekly digest? You could ask questions like these (feel free to come up with your own):

- I feel that I’m contributing to our testing culture in a meaningful way (scored 1-10)

- I feel like our team successfully makes data-driven decisions (scored 1-10)

- What is blocking you, if anything, this week?

- Do you have any ideas for improving our growth process?

- What’s something you and your team did well this week?

Note: you don’t have to ask those exact questions; I clearly just riffed quickly on them. But you get the point. Do a survey like this, focusing on the right things of course, and you can collect great management data as well as enact the mere-measurement effect, leading your team to be more data-driven and enthusiastic about testing.

Andrew Anderson mentioned that talking about the right things – focus areas, scope of a test – reiterates their value and makes it harder to fall into traps:

Andrew Anderson:

“This is why talking about only focus areas and the scope of a test is so important. If you make it clear the discipline is what matters and not the specifics of one experience, or even worse the specific story or “hypothesis” that someone has, the harder you make it for others to fall into those traps.”

Conclusion

Organizational culture is a large and nuanced topic. This article will not, by itself, implement an efficient testing culture into your organization. That requires a variety of factors, not least the simple matter of having a team or a few allies that believe in the value of testing.

However, as has been shown in many arenas, small nudges can lead to big results, and some successful marketers have implemented some clever ideas to this effect. In summary, here are the nudges this article mentioned:

- Script the Critical Moves (i.e. clearly and publicly define the path and goals)

- Commit To a Testing Cadence

- Make it a Game; Trigger Healthy Competition

- Celebrate Failure Publicly and Often

- Embed Triggers Into Your Current Internal Communications

- Prime Your Team Each Week With a 5 Minute Survey/Plan

Not all of these will work for every organization, but some might work for you. Also, you can always get creative – take some of the underlying principles of nudges (defaults, social influence, transparency, etc.), and come up with your own ideas.

Actually, if you have any creative ideas already in action, I’d love to hear them in the comments below!

Alex,

Great content! I appreciate the words by other experts and how you pulled it all together.

Our team uses the mantra Build Small. Learn Fast. Iterate Often to help keep us in the culture of experimentation. For us, combining agile methodologies, lean startup philosophies and design thinking we’ve come up with a winning formula.

Thanks,

Seth